Deploy Genesys Voice Platform


Learn how to deploy Genesys Voice Platform.

Deploy in OpenShift

Prerequisites

- Consul with Service Mesh and DNS

- A shared Postgres database available for GVP Configuration Server

- A SQL Server database available for Reporting Server

  - Create the database in advance (example DB name: gvp_rs).

  - Two users are required: one with admin (dbo) access and one with read-only (ro) access.

  - These credentials are used to create the Reporting Server secrets.

Environment setup

- Log in to the OpenShift cluster from the remote host using the CLI

oc login --token <token> --server <URL of the API server>

- Check the cluster version

oc get clusterversion

- Create the gvp project in the OpenShift cluster

oc new-project gvp

- Set the default project to gvp

oc project gvp

- Bind the genesys-restricted SCC to the default service account

oc adm policy add-scc-to-user genesys-restricted -z default -n gvp

- Create a docker-registry secret to pull images from JFrog

oc create secret docker-registry <credential-name> --docker-server=<docker repo> --docker-username=<username> --docker-password=<API key from jfrog> --docker-email=<emailid>

- Link the secret to the default service account for image pulls

oc secrets link default <credential-name> --for=pull
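The environment setup relies on the oc CLI, and the later sections also use helm and base64. As a minimal sketch (the function name require_tools is hypothetical, not part of any Genesys tooling), a preflight check could confirm the tools are on the PATH before you start:

```shell
# Report any CLI tools from the argument list that are not on PATH.
# Returns 0 when every tool is present, 1 otherwise.
require_tools() {
  missing=0
  for tool in "$@"; do
    if ! command -v "$tool" >/dev/null 2>&1; then
      echo "missing: $tool" >&2
      missing=1
    fi
  done
  return "$missing"
}

# Example (not run here): require_tools oc helm base64 || exit 1
```

The check only confirms that the binaries exist; it does not verify versions or cluster access.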

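Installation order matters with GVP. To deploy without errors, install the services in this order:

1. GVP Configuration Server

2. GVP Service Discovery

3. GVP Reporting Server

4. GVP Resource Manager

5. GVP Media Control Platform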

Helm chart release URLs

Download the GVP Helm charts from JFrog using your credentials:

gvp-configserver : https://<jfrog artifactory/helm location>/gvp-configserver-100.0.1000017.tgz

gvp-sd : https://<jfrog artifactory/helm location>/gvp-sd-100.0.1000019.tgz

gvp-rs : https://<jfrog artifactory/helm location>/gvp-rs-100.0.1000077.tgz

gvp-rm : https://<jfrog artifactory/helm location>/gvp-rm-100.0.1000082.tgz

gvp-mcp : https://<jfrog artifactory/helm location>/gvp-mcp-100.0.1000040.tgz
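The install order follows the numbered sections of this guide: Configuration Server, Service Discovery, Reporting Server, Resource Manager, then Media Control Platform. The sketch below only prints the helm install commands in that order so they can be reviewed before running. It assumes each chart archive was downloaded to the current directory under the file name shown in its URL above, and that the values files follow the <release>-values.yaml pattern used in this guide (gvp-mcp-values.yaml is an assumed name).

```shell
# Print the helm install commands in the required order (nothing is executed).
# Chart versions are copied from the release URLs listed above.
print_install_plan() {
  while read -r release version; do
    echo "helm install $release ./$release-$version.tgz -f $release-values.yaml"
  done <<'EOF'
gvp-configserver 100.0.1000017
gvp-sd 100.0.1000019
gvp-rs 100.0.1000077
gvp-rm 100.0.1000082
gvp-mcp 100.0.1000040
EOF
}

print_install_plan
```

Each service still needs its secrets, ConfigMaps, and volumes created first, as described in the sections that follow.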

1. GVP Configuration Server

Secrets creation

Create the following secrets which are required for the service deployment.

postgres-secret

db-hostname: Hostname of DB Server

db-name: Database Name

db-password: password for db user

db-username: username for db

server-name: Hostname of DB Server

apiVersion: v1
kind: Secret
metadata:
  name: postgres-secret
  namespace: gvp
type: Opaque
data:
  db-username: <base64 encoded value>
  db-password: <base64 encoded value>
  db-hostname: cG9zdGdyZXMtcncuaW5mcmEuc3ZjLmNsdXN0ZXIubG9jYWw=
  db-name: Z3Zw
  server-name: cG9zdGdyZXMtcncuaW5mcmEuc3ZjLmNsdXN0ZXIubG9jYWw=

oc create -f postgres-secret.yaml
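Values under data: in a Secret manifest must be base64 encoded. A small sketch of producing them follows; the helper name b64 is hypothetical. It uses printf rather than echo so that no trailing newline ends up inside the encoded value.

```shell
# Base64-encode a value without a trailing newline, as Secret data fields expect.
b64() {
  printf '%s' "$1" | base64 | tr -d '\n'
}

b64 'gvp'; echo                                    # prints Z3Zw
b64 'postgres-rw.infra.svc.cluster.local'; echo    # prints the db-hostname value shown above
```

As an alternative, oc create secret generic postgres-secret --from-literal=db-name=gvp (one --from-literal per key) encodes the values for you, so no manifest file is needed.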

configserver-secret

password: Password to set for Config DB

username: Username to set for Config DB

apiVersion: v1
kind: Secret
metadata:
  name: configserver-secret
  namespace: gvp
type: Opaque
data:
  username: <base64 encoded value>
  password: <base64 encoded value>

oc create -f configserver-secret.yaml

Install Helm chart

Download the required Helm chart release from the JFrog repository and install. Refer to Helm Chart URLs.

helm install gvp-configserver ./<gvp-configserver-helm-artifact> -f gvp-configserver-values.yaml

- <critical-priority-class> - Set to a priority class that exists on cluster (or create it instead)

- <docker-repo> - Set to your Docker Repo with Private Edition Artifacts

- <credential-name> - Set to your pull secret name

# Default values for gvp-configserver.

# This is a YAML-formatted file.

# Declare variables to be passed into your templates.

## Global Parameters

## Add labels to all the deployed resources

##

podLabels: {}

## Add annotations to all the deployed resources

##

podAnnotations: {}

serviceAccount:

# Specifies whether a service account should be created

create: false

# Annotations to add to the service account

annotations: {}

# The name of the service account to use.

# If not set and create is true, a name is generated using the fullname template

name:

## Deployment Configuration

## replicaCount should be 1 for Config Server

replicaCount: 1

## Base Labels. Please do not change these.

serviceName: gvp-configserver

component: shared

# Namespace

partOf: gvp

## Container image repo settings.

image:

confserv:

registry: <docker-repo>

repository: gvp/gvp_confserv

pullPolicy: IfNotPresent

tag: "{{ .Chart.AppVersion }}"

serviceHandler:

registry: <docker-repo>

repository: gvp/gvp_configserver_servicehandler

pullPolicy: IfNotPresent

tag: "{{ .Chart.AppVersion }}"

dbInit:

registry: <docker-repo>

repository: gvp/gvp_configserver_configserverinit

pullPolicy: IfNotPresent

tag: "{{ .Chart.AppVersion }}"

## Config Server App Configuration

configserver:

## Settings for liveness and readiness probes

## !!! THESE VALUES SHOULD NOT BE CHANGED UNLESS INSTRUCTED BY GENESYS !!!

livenessValues:

path: /cs/liveness

initialDelaySeconds: 30

periodSeconds: 60

timeoutSeconds: 20

failureThreshold: 3

healthCheckAPIPort: 8300

readinessValues:

path: /cs/readiness

initialDelaySeconds: 30

periodSeconds: 30

timeoutSeconds: 20

failureThreshold: 3

healthCheckAPIPort: 8300

alerts:

cpuUtilizationAlertLimit: 70

memUtilizationAlertLimit: 90

workingMemAlertLimit: 7

maxRestarts: 2

## PVCs defined

# none

## Define service(s) for application

service:

type: ClusterIP

host: gvp-configserver-0

port: 8888

targetPort: 8888

## Service Handler configuration.

serviceHandler:

port: 8300

## Secrets storage related settings - k8s secrets only

secrets:

# Used for pulling images/containers from the repositories.

imagePull:

- name: <credential-name>

# Config Server secrets. If k8s is false, csi will be used, else k8s will be used.

# Currently, only k8s is supported!

configServer:

secretName: configserver-secret

secretUserKey: username

secretPwdKey: password

#csiSecretProviderClass: keyvault-gvp-gvp-configserver-secret

# Config Server Postgres DB secrets and settings.

postgres:

dbName: gvp

dbPort: 5432

secretName: postgres-secret

secretAdminUserKey: db-username

secretAdminPwdKey: db-password

secretHostnameKey: db-hostname

secretDbNameKey: db-name

#secretServerNameKey: server-name

## Ingress configuration

ingress:

enabled: false

annotations: {}

# kubernetes.io/ingress.class: nginx

# kubernetes.io/tls-acme: "true"

hosts:

- host: chart-example.local

paths: []

tls: []

# - secretName: chart-example-tls

# hosts:

# - chart-example.local

## App resource requests and limits

## ref: http://kubernetes.io/docs/user-guide/compute-resources/

##

resources:

requests:

memory: "512Mi"

cpu: "500m"

limits:

memory: "1Gi"

cpu: "1"

## App containers' Security Context

## ref: https://kubernetes.io/docs/tasks/configure-pod-container/security-context/#set-the-security-context-for-a-container

##

## Containers should run as genesys user and cannot use elevated permissions

##

securityContext:

runAsUser: 500

runAsGroup: 500

# capabilities:

# drop:

# - ALL

# readOnlyRootFilesystem: true

# runAsNonRoot: true

# runAsUser: 1000

podSecurityContext: {}

# fsGroup: 2000

## Priority Class

## ref: https://kubernetes.io/docs/concepts/configuration/pod-priority-preemption/

## NOTE: this is an optional parameter

##

priorityClassName: <critical-priority-class>

## Affinity for assignment.

## Ref: https://kubernetes.io/docs/concepts/configuration/assign-pod-node/#affinity-and-anti-affinity

##

affinity: {}

## Node labels for assignment.

## ref: https://kubernetes.io/docs/user-guide/node-selection/

##

nodeSelector: {}

## Tolerations for assignment.

## ref: https://kubernetes.io/docs/concepts/configuration/taint-and-toleration/

##

tolerations: []

## Service/Pod Monitoring Settings

## Whether to create Prometheus alert rules or not.

prometheusRule:

create: true

## Grafana dashboard Settings

## Whether to create Grafana dashboard or not.

grafana:

enabled: true

## Enable network policies or not

networkPolicies:

enabled: false

## DNS configuration options

dnsConfig:

options:

- name: ndots

value: "3"


Verify the deployed resources

Verify the deployed resources from OpenShift console/CLI.
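From the CLI, one way to verify is to list the pods and flag any that are not fully ready. The sketch below is an illustration under stated assumptions: not_ready_pods is a hypothetical helper, and it relies on the default column layout of oc get pods (NAME, READY, STATUS, ...).

```shell
# Read `oc get pods --no-headers` output on stdin and print pods that are
# not Running or whose ready count is below the container count.
# Exit status is 0 only when no such pod is found.
not_ready_pods() {
  awk '
    NF == 0 { next }
    {
      split($2, r, "/")
      if ($3 != "Running" || r[1] != r[2]) { print $1; bad = 1 }
    }
    END { exit (bad ? 1 : 0) }
  '
}

# Usage against a live cluster (not run here):
#   oc get pods -n gvp --no-headers | not_ready_pods
```

Pods from finished jobs show a Completed status and would also be listed, so read the output with that in mind.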

2. GVP Service Discovery

NOTE: After the GVP-SD pod is deployed, it reports a few errors. Ignore them and move on to the next deployment. Service Discovery starts working once RM and MCP are deployed.

Secrets creation

Create the following secrets which are required for the service deployment.

shared-consul-consul-gvp-token

apiVersion: v1
kind: Secret
metadata:
  name: shared-consul-consul-gvp-token
  namespace: gvp
type: Opaque
data:
  consul-consul-gvp-token: ZmU2NjFkNWYtYzVmNi1mZTJlLTgyM2MtYTAyZGQwN2JlMzll

oc create -f shared-consul-consul-gvp-token-secret.yaml

ConfigMap creation

Creation of a tenant-inventory ConfigMap is required for service discovery deployment.

If the tenant has not been deployed yet, you will not have the information needed to populate the ConfigMap. In that case, create an empty ConfigMap:

oc create configmap tenant-inventory -n gvp

Provisioning a new tenant

Create a file (t100.json in the example) containing at minimum: name, id, gws-ccid, and default-application (should be set to IVRAppDefault) from your tenant deployment.

{
  "name": "t100",
  "id": "80dd",
  "gws-ccid": "9350e2fc-a1dd-4c65-8d40-1f75a2e080dd",
  "default-application": "IVRAppDefault"
}

oc create configmap tenant-inventory --from-file t100.json -n gvp
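Before creating the ConfigMap, it can help to confirm that the tenant file contains the four required keys. The following is a rough sketch: check_tenant_file is a hypothetical helper, and it does a plain text match rather than parsing JSON, so it does not validate values or syntax.

```shell
# Crude check that a tenant file mentions each required key.
# Returns 0 when all keys are found, 1 otherwise.
check_tenant_file() {
  file=$1
  status=0
  for key in name id gws-ccid default-application; do
    if ! grep -q "\"$key\"[[:space:]]*:" "$file"; then
      echo "missing key: $key" >&2
      status=1
    fi
  done
  return "$status"
}

# Example (not run here): check_tenant_file t100.json && oc create configmap tenant-inventory --from-file t100.json -n gvp
```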

Updating a tenant

Delete the existing tenant-inventory ConfigMap, then re-create it from the updated tenant file:

oc delete configmap tenant-inventory -n gvp --ignore-not-found

oc create configmap tenant-inventory --from-file t100.json -n gvp

Provisioning process details

For additional details on the provisioning process, refer to Provision Genesys Voice Platform.

Install Helm chart

Download the required Helm chart release from the JFrog repository and install. Refer to Helm Chart URLs.

helm install gvp-sd ./<gvp-sd-helm-artifact> -f gvp-sd-values.yaml

- <critical-priority-class> - Set to a priority class that exists on cluster (or create it instead)

- <docker-repo> - Set to your Docker Repo with Private Edition Artifacts

- <credential-name> - Set to your pull secret name

# Default values for gvp-sd.

# This is a YAML-formatted file.

# Declare variables to be passed into your templates.

## Global Parameters

## Add labels to all the deployed resources

##

podLabels: {}

## Add annotations to all the deployed resources

##

podAnnotations: {}

serviceAccount:

# Specifies whether a service account should be created

create: false

# Annotations to add to the service account

annotations: {}

# The name of the service account to use.

# If not set and create is true, a name is generated using the fullname template

name:

## Deployment Configuration

replicaCount: 1

smtp: allowed

## Name overrides

nameOverride: ""

fullnameOverride: ""

## Base Labels. Please do not change these.

component: shared

partOf: gvp

image:

registry: <docker-repo>

repository: gvp/gvp_sd

tag: "{{ .Chart.AppVersion }}"

pullPolicy: IfNotPresent

## PVCs defined

# none

## Define service for application.

service:

name: gvp-sd

type: ClusterIP

port: 8080

## Application configuration parameters.

env:

MCP_SVC_NAME: "gvp-mcp"

EXTERNAL_CONSUL_SERVER: ""

CONSUL_PORT: "8501"

CONFIG_SERVER_HOST: "gvp-configserver"

CONFIG_SERVER_PORT: "8888"

CONFIG_SERVER_APP: "default"

HTTP_SERVER_PORT: "8080"

METRICS_EXPORTER_PORT: "9090"

DEF_MCP_FOLDER: "MCP_Configuration_Unit\\MCP_LRG"

TEST_MCP_FOLDER: "MCP_Configuration_Unit_Test\\MCP_LRG"

SYNC_INIT_DELAY: "10000"

SYNC_PERIOD: "60000"

MCP_PURGE_PERIOD_MINS: "0"

EMAIL_METERING_FACTOR: "10"

RECORDINGS_CONTAINER: "ccerp-recordings"

TENANT_KV_FOLDER: "tenants"

TENANT_CONFIGMAP_FOLDER: "/etc/config"

SMTP_SERVER: "smtp-relay.smtp.svc.cluster.local"

## Secrets storage related settings

secrets:

# Used for pulling images/containers from the repositories.

imagePull:

- name: <credential-name>

# If k8s is true, k8s will be used, else vault secret will be used.

configServer:

k8s: true

k8sSecretName: configserver-secret

k8sUserKey: username

k8sPasswordKey: password

vaultSecretName: "/configserver-secret"

vaultUserKey: "configserver-username"

vaultPasswordKey: "configserver-password"

# If k8s is true, k8s will be used, else vault secret will be used.

consul:

k8s: true

k8sTokenName: "shared-consul-consul-gvp-token"

k8sTokenKey: "consul-consul-gvp-token"

vaultSecretName: "/consul-secret"

vaultSecretKey: "consul-consul-gvp-token"

# GTTS key, password via k8s secret, if k8s is true. If false, this data comes from tenant profile.

gtts:

k8s: false

k8sSecretName: gtts-secret

EncryptedKey: encrypted-key

PasswordKey: password

ingress:

enabled: false

annotations: {}

# kubernetes.io/ingress.class: nginx

# kubernetes.io/tls-acme: "true"

hosts:

- host: chart-example.local

paths: []

tls: []

# - secretName: chart-example-tls

# hosts:

# - chart-example.local

resources:

requests:

memory: "2Gi"

cpu: "1000m"

limits:

memory: "2Gi"

cpu: "1000m"

## App containers' Security Context

## ref: https://kubernetes.io/docs/tasks/configure-pod-container/security-context/#set-the-security-context-for-a-container

##

## Containers should run as genesys user and cannot use elevated permissions

## Pod level security context

podSecurityContext:

fsGroup: 500

runAsUser: 500

runAsGroup: 500

runAsNonRoot: true

## Container security context

securityContext:

runAsUser: 500

runAsGroup: 500

runAsNonRoot: true

## Priority Class

## ref: https://kubernetes.io/docs/concepts/configuration/pod-priority-preemption/

## NOTE: this is an optional parameter

##

priorityClassName: <critical-priority-class>

## Affinity for assignment.

## Ref: https://kubernetes.io/docs/concepts/configuration/assign-pod-node/#affinity-and-anti-affinity

##

affinity: {}

## Node labels for assignment.

## ref: https://kubernetes.io/docs/user-guide/node-selection/

##

nodeSelector: {}

## Tolerations for assignment.

## ref: https://kubernetes.io/docs/concepts/configuration/taint-and-toleration/

##

tolerations: []

## Service/Pod Monitoring Settings

prometheus:

# Enable for Prometheus operator

podMonitor:

enabled: true

## Enable network policies or not

networkPolicies:

enabled: false

## DNS configuration options

dnsConfig:

options:

- name: ndots

value: "3"


Verify the deployed resources

Verify the deployed resources from OpenShift console/CLI.

3. GVP Reporting Server

Secrets creation

Create the following secrets which are required for the service deployment.

rs-dbreader-password

db_hostname:

db_name:

db_password:

db_username:

apiVersion: v1
kind: Secret
metadata:
  name: rs-dbreader-password
  namespace: gvp
type: Opaque
data:
  db_username: <base64 encoded value>
  db_password: <base64 encoded value>
  db_hostname: bXNzcWxzZXJ2ZXJvcGVuc2hpZnQuZGF0YWJhc2Uud2luZG93cy5uZXQ=
  db_name: cnNfZ3Zw

oc create -f rs-dbreader-password-secret.yaml

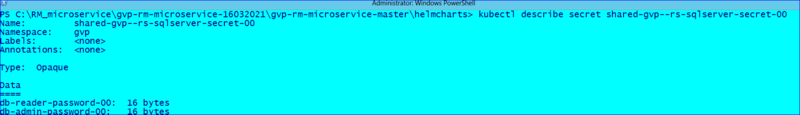

shared-gvp-rs-sqlserver-secret

db-admin-password:

db-reader-password:

apiVersion: v1
kind: Secret
metadata:
  name: shared-gvp-rs-sqlserver-secret
  namespace: gvp
type: Opaque
data:
  db-admin-password: <base64 encoded value>
  db-reader-password: <base64 encoded value>

oc create -f shared-gvp-rs-sqlserver-secret.yaml
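The two password values in this Secret go under data:, so they must be base64 encoded. As a sketch, the manifest can be rendered from plain-text passwords like this; render_rs_secret is a hypothetical helper and the passwords in the example are placeholders.

```shell
# Render the shared-gvp-rs-sqlserver-secret manifest with base64-encoded values.
# Arguments: admin password, reader password (both plain text).
render_rs_secret() {
  admin=$(printf '%s' "$1" | base64 | tr -d '\n')
  reader=$(printf '%s' "$2" | base64 | tr -d '\n')
  cat <<EOF
apiVersion: v1
kind: Secret
metadata:
  name: shared-gvp-rs-sqlserver-secret
  namespace: gvp
type: Opaque
data:
  db-admin-password: $admin
  db-reader-password: $reader
EOF
}

# Placeholder passwords; substitute the real dbo and read-only credentials.
render_rs_secret 'admin-pass' 'reader-pass'
```

Redirect the output to a file and pass that file to oc create -f, or pipe it directly with oc create -f -.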

Persistent Volumes creation

Note: The steps for PV creation can be skipped if OCS is used to auto provision the persistent volumes.

Create the following PVs which are required for the service deployment.

If your OpenShift deployment is capable of self-provisioning Persistent Volumes, this step can be skipped. The volumes will be created by the provisioner.

apiVersion: v1
kind: PersistentVolume
metadata:
  name: gvp-rs-0
  namespace: gvp
spec:
  capacity:
    storage: 30Gi
  accessModes:
    - ReadWriteOnce
  persistentVolumeReclaimPolicy: Retain
  storageClassName: gvp
  nfs:
    path: /export/vol1/PAT/gvp/rs-01
    server: 192.168.30.51

oc create -f gvp-rs-pv.yaml

Install Helm chart

Download the required Helm chart release from the JFrog repository and install. Refer to Helm Chart URLs.

helm install gvp-rs ./<gvp-rs-helm-artifact> -f gvp-rs-values.yaml

- <docker-repo> - Set to your Docker Repo with Private Edition Artifacts

- <credential-name> - Set to your pull secret name

## Global Parameters

## Add labels to all the deployed resources

##

labels:

enabled: true

serviceGroup: "gvp"

componentType: "shared"

serviceAccount:

# Specifies whether a service account should be created

create: false

# Annotations to add to the service account

annotations: {}

# The name of the service account to use.

# If not set and create is true, a name is generated using the fullname template

name:

## Primary App Configuration

##

# primaryApp:

# type: ReplicaSet

# Should include the defaults for replicas

deployment:

replicaCount: 1

strategy: Recreate

namespace: gvp

nameOverride: ""

fullnameOverride: ""

image:

registry: <docker-repo>

gvprsrepository: gvp/gvp_rs

snmprepository: gvp/gvp_snmp

rsinitrepository: gvp/gvp_rs_init

rstag:

rsinittag:

snmptag: v9.0.040.07

pullPolicy: Always

imagePullSecrets:

- name: "<credential-name>"

## liveness and readiness probes

## !!! THESE OPTIONS SHOULD NOT BE CHANGED UNLESS INSTRUCTED BY GENESYS !!!

livenessValues:

path: /ems-rs/components

initialDelaySeconds: 30

periodSeconds: 120

timeoutSeconds: 3

failureThreshold: 3

readinessValues:

path: /ems-rs/components

initialDelaySeconds: 10

periodSeconds: 60

timeoutSeconds: 3

failureThreshold: 3

## PVCs defined

volumes:

pvc:

storageClass: managed-premium

claimSize: 20Gi

activemqAndLocalConfigPath: "/billing/gvp-rs"

## Define service(s) for application. Fields may need to be modified based on `type`

service:

type: ClusterIP

restapiport: 8080

activemqport: 61616

envinjectport: 443

dnsport: 53

configserverport: 8888

snmpport: 1705

## ConfigMaps with Configuration

## Use Config Map for creating environment variables

context:

env:

CFGAPP: default

GVP_RS_SERVICE_HOSTNAME: gvp-rs.gvp.svc.cluster.local

#CFGPASSWORD: password

#CFGUSER: default

CFG_HOST: gvp-configserver.gvp.svc.cluster.local

CFG_PORT: '8888'

CMDLINE: ./rs_startup.sh

DBNAME: gvp_rs

#DBPASS: 'jbIKfoS6LpfgaU$E'

DBUSER: openshiftadmin

rsDbSharedUsername: openshiftadmin

DBPORT: 1433

ENVTYPE: ""

GenesysIURegion: ""

localconfigcachepath: /billing/gvp-rs/data/cache

HOSTFOLDER: Hosts

HOSTOS: CFGRedHatLinux

LCAPORT: '4999'

MSSQLHOST: mssqlserveropenshift.database.windows.net

RSAPP: azure_rs

RSJVM_INITIALHEAPSIZE: 500m

RSJVM_MAXHEAPSIZE: 1536m

RSFOLDER: Applications

RS_VERSION: 9.0.032.22

STDOUT: 'true'

WRKDIR: /usr/local/genesys/rs/

SNMPAPP: azure_rs_snmp

SNMP_WORKDIR: /usr/sbin

SNMP_CMDLINE: snmpd

SNMPFOLDER: Applications

RSCONFIG:

messaging:

activemq.memoryUsageLimit: "256 mb"

activemq.dataDirectory: "/billing/gvp-rs/data/activemq"

log:

verbose: "trace"

trace: "stdout"

dbmp:

rs.db.retention.operations.daily.default: "40"

rs.db.retention.operations.monthly.default: "40"

rs.db.retention.operations.weekly.default: "40"

rs.db.retention.var.daily.default: "40"

rs.db.retention.var.monthly.default: "40"

rs.db.retention.var.weekly.default: "40"

rs.db.retention.cdr.default: "40"

# Default secrets storage to k8s secrets with csi able to be optional

secret:

# keyVaultSecret is a flag that selects between secret types (k8s or CSI). If keyVaultSecret is set to false, the k8s secret is used

keyVaultSecret: false

#RS SQL server secret

rsSecretName: shared-gvp-rs-sqlserver-secret

# secretProviderClassName is not used when keyVaultSecret is set to false

secretProviderClassName: keyvault-gvp-rs-sqlserver-secret-00

dbreadersecretFileName: db-reader-password

dbadminsecretFileName: db-admin-password

#Configserver secret

#If keyVaultSecret set to false the below parameters will not be used.

configserverProviderClassName: gvp-configserver-secret

cfgSecretFileNameForCfgUsername: configserver-username

cfgSecretFileNameForCfgPassword: configserver-password

#If keyVaultSecret set to true the below parameters will not be used.

cfgServerSecretName: configserver-secret

cfgSecretKeyNameForCfgUsername: username

cfgSecretKeyNameForCfgPassword: password

## Ingress configuration

ingress:

enabled: false

annotations: {}

# kubernetes.io/ingress.class: nginx

# kubernetes.io/tls-acme: "true"

hosts:

- host: chart-example.local

paths: []

tls: []

# - secretName: chart-example-tls

# hosts:

# - chart-example.local

networkPolicies:

enabled: false

## primaryAppresource requests and limits

## ref: http://kubernetes.io/docs/user-guide/compute-resources/

##

resourceForRS:

# We usually recommend not to specify default resources and to leave this as a conscious

# choice for the user. This also increases chances charts run on environments with little

# resources, such as Minikube. If you do want to specify resources, uncomment the following

# lines, adjust them as necessary, and remove the curly braces after 'resources:'.

requests:

memory: "500Mi"

cpu: "200m"

limits:

memory: "1Gi"

cpu: "300m"

resoueceForSnmp:

requests:

memory: "500Mi"

cpu: "100m"

limits:

memory: "1Gi"

cpu: "150m"

## primaryApp containers' Security Context

## ref: https://kubernetes.io/docs/tasks/configure-pod-container/security-context/#set-the-security-context-for-a-container

##

## Containers should run as genesys user and cannot use elevated permissions

securityContext:

runAsNonRoot: true

runAsUser: 500

runAsGroup: 500

podSecurityContext:

fsGroup: 500

## Priority Class

## ref: https://kubernetes.io/docs/concepts/configuration/pod-priority-preemption/

##

priorityClassName: ""

## Affinity for assignment.

## Ref: https://kubernetes.io/docs/concepts/configuration/assign-pod-node/#affinity-and-anti-affinity

##

affinity:

## Node labels for assignment.

## ref: https://kubernetes.io/docs/user-guide/node-selection/

##

nodeSelector:

## Tolerations for assignment.

## ref: https://kubernetes.io/docs/concepts/configuration/taint-and-toleration/

##

tolerations: []

## Extra labels

## ref: https://kubernetes.io/docs/concepts/overview/working-with-objects/labels/

##

# labels: {}

## Extra Annotations

## ref: https://kubernetes.io/docs/concepts/overview/working-with-objects/annotations/

##

# annotations: {}

## Service/Pod Monitoring Settings

prometheus:

enabled: true

metric:

port: 9116

# Enable for Prometheus operator

podMonitor:

enabled: true

metric:

path: /snmp

module: [ if_mib ]

target: [ 127.0.0.1:1161 ]

monitoring:

prometheusRulesEnabled: true

grafanaEnabled: true

monitor:

monitorName: gvp-monitoring

##DNS Settings

dnsConfig:

options:

- name: ndots

value: "3"


Verify the deployed resources

Verify the deployed resources from OpenShift console/CLI.

Deployment validation - Success

1. Log in to the console and check that the gvp-rs pod is ready and running: "oc get pods -o wide".

2. Describe the pod and check that both liveness and readiness probes are passing: "oc describe pod <gvp-rs pod name>".

3. Check that the RS applications and DB details are properly configured in GVP Configuration Server.

4. Check that the secrets are created in the Kubernetes cluster.

5. Check that DB creation and configuration is successful.

Deployment validation - Failure

To debug deployment failure, follow the below steps:

1. Log into console and check if gvp-rs pod is ready and running "oc get pods -o wide".

2. If the RS container is continuously restarting, you need to check the liveness and readiness probe status.

3. Describe the RS pod to check the liveness and readiness probe status: "oc describe pod <gvp-rs pod name>".

4. If probe failures are observed, check if PVC is attached properly and check RS logs if Config data is read properly.

5. If rs-init container is failing, check RS and configserver/DB connectivity.

4. GVP Resource Manager

Resource Manager will not pass readiness checks until an MCP has registered properly. This is because the service is not available without MCPs.

Persistent Volumes creation

Note: The steps for PV creation can be skipped if OCS is used to auto provision the persistent volumes.

Create the following PVs which are required for the service deployment.

If your OpenShift deployment is capable of self-provisioning Persistent Volumes, this step can be skipped. The volumes will be created by the provisioner.

apiVersion: v1
kind: PersistentVolume
metadata:
  name: gvp-rm-01
spec:
  capacity:
    storage: 30Gi
  accessModes:
    - ReadWriteOnce
  persistentVolumeReclaimPolicy: Retain
  storageClassName: gvp
  nfs:
    path: /export/vol1/PAT/gvp/rm-01
    server: 192.168.30.51

oc create -f gvp-rm-01-pv.yaml

apiVersion: v1
kind: PersistentVolume
metadata:
  name: gvp-rm-02
spec:
  capacity:
    storage: 30Gi
  accessModes:
    - ReadWriteOnce
  persistentVolumeReclaimPolicy: Retain
  storageClassName: gvp
  nfs:
    path: /export/vol1/PAT/gvp/rm-02
    server: 192.168.30.51

oc create -f gvp-rm-02-pv.yaml

apiVersion: v1
kind: PersistentVolume
metadata:
  name: gvp-rm-logs-01
spec:
  capacity:
    storage: 10Gi
  accessModes:
    - ReadWriteOnce
  persistentVolumeReclaimPolicy: Recycle
  storageClassName: gvp
  nfs:
    path: /export/vol1/PAT/gvp/rm-logs-01
    server: 192.168.30.51

oc create -f gvp-rm-logs-01-pv.yaml

apiVersion: v1
kind: PersistentVolume
metadata:
  name: gvp-rm-logs-02
spec:
  capacity:
    storage: 10Gi
  accessModes:
    - ReadWriteOnce
  persistentVolumeReclaimPolicy: Recycle
  storageClassName: gvp
  nfs:
    path: /export/vol1/PAT/gvp/rm-logs-02
    server: 192.168.30.51

oc create -f gvp-rm-logs-02-pv.yaml
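The four Resource Manager volumes differ only in name, size, reclaim policy, and NFS path, so they can be generated from one template. The sketch below is one possible approach, not a Genesys-provided script: render_pv and render_rm_pvs are hypothetical helpers, and the NFS server and export paths are the example values from the manifests above.

```shell
# Render one PersistentVolume manifest.
# Arguments: volume suffix (for example rm-01), size, reclaim policy.
render_pv() {
  pv_name=$1
  pv_size=$2
  pv_policy=$3
  cat <<EOF
apiVersion: v1
kind: PersistentVolume
metadata:
  name: gvp-$pv_name
spec:
  capacity:
    storage: $pv_size
  accessModes:
    - ReadWriteOnce
  persistentVolumeReclaimPolicy: $pv_policy
  storageClassName: gvp
  nfs:
    path: /export/vol1/PAT/gvp/$pv_name
    server: 192.168.30.51
EOF
}

# Emit all four RM volumes as one multi-document YAML stream.
render_rm_pvs() {
  while read -r suffix size policy; do
    render_pv "$suffix" "$size" "$policy"
    echo '---'
  done <<'EOF'
rm-01 30Gi Retain
rm-02 30Gi Retain
rm-logs-01 10Gi Recycle
rm-logs-02 10Gi Recycle
EOF
}

# Usage against a live cluster (not run here):
#   render_rm_pvs | oc create -f -
```

Generating the manifests this way keeps the four definitions consistent; review the rendered output before applying it.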

Install Helm chart

Download the required Helm chart release from the JFrog repository and install. Refer to Helm Chart URLs.

helm install gvp-rm ./<gvp-rm-helm-artifact> -f gvp-rm-values.yaml

- <docker-repo> - Set to your Docker Repo with Private Edition Artifacts

- <credential-name> - Set to your pull secret name

## Global Parameters

## Add labels to all the deployed resources

##

labels:

enabled: true

serviceGroup: "gvp"

componentType: "shared"

## Primary App Configuration

##

# primaryApp:

# type: ReplicaSet

# Should include the defaults for replicas

deployment:

replicaCount: 2

deploymentEnv: "UPDATE_ENV"

namespace: gvp

clusterDomain: "svc.cluster.local"

nameOverride: ""

fullnameOverride: ""

image:

registry: <docker-repo>

gvprmrepository: gvp/gvp_rm

cfghandlerrepository: gvp/gvp_rm_cfghandler

snmprepository: gvp/gvp_snmp

gvprmtestrepository: gvp/gvp_rm_test

cfghandlertag:

rmtesttag:

rmtag:

snmptag: v9.0.040.07

pullPolicy: Always

imagePullSecrets:

- name: "<credential-name>"

dnsConfig:

options:

- name: ndots

value: "3"

# Pod termination grace period 15 mins.

gracePeriodSeconds: 900

## liveness and readiness probes

## !!! THESE OPTIONS SHOULD NOT BE CHANGED UNLESS INSTRUCTED BY GENESYS !!!

livenessValues:

path: /rm/liveness

initialDelaySeconds: 60

periodSeconds: 90

timeoutSeconds: 20

failureThreshold: 3

readinessValues:

path: /rm/readiness

initialDelaySeconds: 10

periodSeconds: 60

timeoutSeconds: 20

failureThreshold: 3

## PVCs defined

volumes:

billingpvc:

storageClass: managed-premium

claimSize: 20Gi

mountPath: "/rm"

## Define RM log storage volume type

rmLogStorage:

volumeType:

persistentVolume:

enabled: false

storageClass: disk-premium

claimSize: 50Gi

accessMode: ReadWriteOnce

hostPath:

enabled: true

path: /mnt/log

emptyDir:

enabled: false

containerMountPath:

path: /mnt/log

## FluentBit Settings

fluentBitSidecar:

enabled: false

## Define service(s) for application. Fields may need to be modified based on `type`

service:

type: ClusterIP

port: 5060

rmHealthCheckAPIPort: 8300

## ConfigMaps with Configuration

## Use Config Map for creating environment variables

context:

env:

cfghandler:

CFGSERVER: gvp-configserver.gvp.svc.cluster.local

CFGSERVERBACKUP: gvp-configserver.gvp.svc.cluster.local

CFGPORT: "8888"

CFGAPP: "default"

RMAPP: "azure_rm"

RMFOLDER: "Applications\\RM_MicroService\\RM_Apps"

HOSTFOLDER: "Hosts\\RM_MicroService"

MCPFOLDER: "MCP_Configuration_Unit\\MCP_LRG"

SNMPFOLDER: "Applications\\RM_MicroService\\SNMP_Apps"

EnvironmentType: "prod"

CONFSERVERAPP: "confserv"

RSAPP: "azure_rs"

SNMPAPP: "azure_rm_snmp"

STDOUT: "true"

VOICEMAILSERVICEDIDNUMBER: "55551111"

RMCONFIG:

rm:

sip-header-for-dnis: "Request-Uri"

ignore-gw-lrg-configuration: "true"

ignore-ruri-tenant-dbid: "true"

log:

verbose: "trace"

subscription:

sip.transport.dnsharouting: "true"

sip.headerutf8verification: "false"

sip.transport.setuptimer.tcp: "5000"

sip.threadpoolsize: "1"

registrar:

sip.transport.dnsharouting: "true"

sip.headerutf8verification: "false"

sip.transport.setuptimer.tcp: "5000"

sip.threadpoolsize: "1"

proxy:

sip.transport.dnsharouting: "true"

sip.headerutf8verification: "false"

sip.transport.setuptimer.tcp: "5000"

sip.threadpoolsize: "16"

sip.maxtcpconnections: "1000"

monitor:

sip.transport.dnsharouting: "true"

sip.maxtcpconnections: "1000"

sip.headerutf8verification: "false"

sip.transport.setuptimer.tcp: "5000"

sip.threadpoolsize: "1"

ems:

rc.cdr.local_queue_path: "/rm/ems/data/cdrQueue_rm.db"

rc.ors.local_queue_path: "/rm/ems/data/orsQueue_rm.db"

# Default secrets storage to k8s secrets with csi able to be optional

secret:

# keyVaultSecret is a flag that selects between secret types (k8s or CSI). If keyVaultSecret is set to false, the k8s secret is used

keyVaultSecret: false

#If keyVaultSecret set to false the below parameters will not be used.

configserverProviderClassName: gvp-configserver-secret

cfgSecretFileNameForCfgUsername: configserver-username

cfgSecretFileNameForCfgPassword: configserver-password

#If keyVaultSecret set to true the below parameters will not be used.

cfgServerSecretName: configserver-secret

cfgSecretKeyNameForCfgUsername: username

cfgSecretKeyNameForCfgPassword: password

## Ingress configuration

ingress:

enabled: false

annotations: {}

# kubernetes.io/ingress.class: nginx

# kubernetes.io/tls-acme: "true"

paths: []

hosts:

- chart-example.local

tls: []

# - secretName: chart-example-tls

# hosts:

# - chart-example.local

networkPolicies:

enabled: false

sip:

serviceName: sipnode

## primaryAppresource requests and limits

## ref: http://kubernetes.io/docs/user-guide/compute-resources/

##

resourceForRM:

# We usually recommend not to specify default resources and to leave this as a conscious

# choice for the user. This also increases chances charts run on environments with little

# resources, such as Minikube. If you do want to specify resources, uncomment the following

# lines, adjust them as necessary, and remove the curly braces after 'resources:'.

requests:

memory: "1Gi"

cpu: "200m"

ephemeral-storage: "10Gi"

limits:

memory: "2Gi"

cpu: "250m"

resoueceForSnmp:

requests:

memory: "500Mi"

cpu: "100m"

limits:

memory: "1Gi"

cpu: "150m"

## primaryApp containers' Security Context

## ref: https://kubernetes.io/docs/tasks/configure-pod-container/security-context/#set-the-security-context-for-a-container

##

## Containers should run as genesys user and cannot use elevated permissions

securityContext:

fsGroup: 500

runAsNonRoot: true

runAsUserRM: 500

runAsGroupRM: 500

runAsUserCfghandler: 500

runAsGroupCfghandler: 500

## Priority Class

## ref: https://kubernetes.io/docs/concepts/configuration/pod-priority-preemption/

##

priorityClassName: ""

## Affinity for assignment.

## Ref: https://kubernetes.io/docs/concepts/configuration/assign-pod-node/#affinity-and-anti-affinity

##

affinity:

## Node labels for assignment.

## ref: https://kubernetes.io/docs/user-guide/node-selection/

##

nodeSelector:

## Tolerations for assignment.

## ref: https://kubernetes.io/docs/concepts/configuration/taint-and-toleration/

##

tolerations: []

## Service/Pod Monitoring Settings

prometheus:

enabled: true

metric:

port: 9116

# Enable for Prometheus operator

podMonitor:

enabled: true

metric:

path: /snmp

module: [ if_mib ]

target: [ 127.0.0.1:1161 ]

monitoring:

prometheusRulesEnabled: true

grafanaEnabled: true

monitor:

monitorName: gvp-monitoring


Verify the deployed resources

Verify the deployed resources from OpenShift console/CLI.

Deployment validation - success

1. Log into the console and check that the gvp-rm-0 and gvp-rm-1 pods are ready and running: "oc get pods -o wide".

2. Describe each pod and check that both the liveness and readiness probes are passing: "oc describe pod gvp-rm-0" / "oc describe pod gvp-rm-1".

3. The LRG options configured by Resource Manager can be changed in the LRGConfig section of values.yaml. For example,

LRGConfig:

gvp.lrg:

load-balance-scheme: "round-robin"

4. When Resource Manager is deployed, it creates the LRG "MCP_Configuration_Unit\MCP_LRG", the configuration unit, and the default IVR Profiles in the environment tenant.

For information on provisioning a new tenant, refer to Provision Genesys Voice Platform.

Resource Manager uses gvp-tenant-id, the contact center ID, and the media service type coming from SIP Server in the INVITE message to identify the tenant and pick the IVR Profiles.
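The pod check in step 1 can be scripted. The following is a minimal sketch, assuming the default column layout of "oc get pods --no-headers" (NAME, READY, STATUS, ...) and the gvp project created during environment setup. The function name not_ready_pods is hypothetical and is not part of GVP or OpenShift.

```shell
#!/bin/sh
# Hypothetical helper: reads `oc get pods --no-headers` output on stdin and
# prints the name of every gvp-rm pod that is not fully ready and Running.
# Assumes the default column layout: NAME READY STATUS RESTARTS AGE ...
not_ready_pods() {
  awk '$1 ~ /^gvp-rm-/ {
         split($2, r, "/")            # READY looks like 2/3
         if (r[1] != r[2] || $3 != "Running") print $1
       }'
}

# Usage against a live cluster (requires an active oc login):
#   oc get pods -n gvp --no-headers | not_ready_pods
```

Empty output means that every gvp-rm pod reports all of its containers ready and a Running status.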

Deployment validation - failure

To debug deployment failure, do the following:

1. Log into console and check if gvp-rm-0 and gvp-rm-1 pods are ready and running "oc get pods -o wide".

2. If the RM container is continuously restarting, check the liveness and readiness probe status.

3. Describe the RM pods to check the liveness and readiness probe status: "oc describe pod gvp-rm-0" / "oc describe pod gvp-rm-1".

4. If probe failures are observed, check for MCP availability and MCP applications in RM LRG. Check RM logs to find the root cause.

5. If rm-init container is failing, check RM and Configuration Server connectivity, and check whether RM configuration details are properly configured.
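To spot probe failures quickly in the pod description, the event lines can be filtered. This is a minimal sketch, assuming the kubelet's usual event wording ("Liveness probe failed: ..." and "Readiness probe failed: ..."). The function name probe_failures is hypothetical.

```shell
#!/bin/sh
# Hypothetical helper: reads `oc describe pod <name>` output on stdin and
# prints only the event lines that report a failed liveness or readiness probe.
# Assumes the kubelet's usual event wording ("Liveness probe failed: ...").
probe_failures() {
  # `|| true` keeps the exit status at 0 when no failures are found
  grep -E '(Liveness|Readiness) probe failed' || true
}

# Usage against a live cluster (requires an active oc login):
#   oc describe pod gvp-rm-0 -n gvp | probe_failures
```

If the filter prints nothing, no probe failure events are currently recorded for the pod, and the RM logs are the next place to look.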

5. GVP Media Control Platform

Persistent Volumes creation

Note: The steps for PV creation can be skipped if OCS is used to auto provision the persistent volumes.

Create the following PVs which are required for the service deployment.

If your OpenShift deployment can self-provision Persistent Volumes, this step can be skipped. The provisioner creates the volumes.

apiVersion: v1

kind: PersistentVolume

metadata:

name: gvp-mcp-logs-01

spec:

capacity:

storage: 10Gi

accessModes:

- ReadWriteOnce

persistentVolumeReclaimPolicy: Recycle

storageClassName: gvp

nfs:

path: /export/vol1/PAT/gvp/mcp-logs-01

server: 192.168.30.51

|

oc create -f gvp-mcp-logs-01-pv.yaml |

apiVersion: v1

kind: PersistentVolume

metadata:

name: gvp-mcp-logs-02

spec:

capacity:

storage: 10Gi

accessModes:

- ReadWriteOnce

persistentVolumeReclaimPolicy: Recycle

storageClassName: gvp

nfs:

path: /export/vol1/PAT/gvp/mcp-logs-02

server: 192.168.30.51

|

oc create -f gvp-mcp-logs-02-pv.yaml |

apiVersion: v1

kind: PersistentVolume

metadata:

name: gvp-mcp-rup-volume-01

spec:

capacity:

storage: 40Gi

accessModes:

- ReadWriteOnce

persistentVolumeReclaimPolicy: Recycle

storageClassName: disk-premium

nfs:

path: /export/vol1/PAT/gvp/mcp-logs-01

server: 192.168.30.51

|

oc create -f gvp-mcp-rup-volume-01-pv.yaml |

apiVersion: v1

kind: PersistentVolume

metadata:

name: gvp-mcp-rup-volume-02

spec:

capacity:

storage: 40Gi

accessModes:

- ReadWriteOnce

persistentVolumeReclaimPolicy: Recycle

storageClassName: disk-premium

nfs:

path: /export/vol1/PAT/gvp/mcp-logs-02

server: 192.168.30.51

|

oc create -f gvp-mcp-rup-volume-02-pv.yaml |

apiVersion: v1

kind: PersistentVolume

metadata:

name: gvp-mcp-recording-volume-01

spec:

capacity:

storage: 40Gi

accessModes:

- ReadWriteOnce

persistentVolumeReclaimPolicy: Recycle

storageClassName: gvp

nfs:

path: /export/vol1/PAT/gvp/mcp-logs-01

server: 192.168.30.51

|

oc create -f gvp-mcp-recordings-volume-01-pv.yaml |

apiVersion: v1

kind: PersistentVolume

metadata:

name: gvp-mcp-recording-volume-02

spec:

capacity:

storage: 40Gi

accessModes:

- ReadWriteOnce

persistentVolumeReclaimPolicy: Recycle

storageClassName: gvp

nfs:

path: /export/vol1/PAT/gvp/mcp-logs-02

server: 192.168.30.51

|

oc create -f gvp-mcp-recordings-volume-02-pv.yaml |
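The six manifests above differ only in name, size, storage class, and NFS path, so they can be generated from one template. The following is a minimal sketch that also shows the YAML indentation the manifests need. The function name pv_manifest is hypothetical, and the NFS server and paths are the example values from above, not required settings.

```shell
#!/bin/sh
# Hypothetical helper: prints an NFS PersistentVolume manifest.
# Arguments: <name> <size> <storageClass> <nfs-path> <nfs-server>
pv_manifest() {
  cat <<EOF
apiVersion: v1
kind: PersistentVolume
metadata:
  name: $1
spec:
  capacity:
    storage: $2
  accessModes:
    - ReadWriteOnce
  persistentVolumeReclaimPolicy: Recycle
  storageClassName: $3
  nfs:
    path: $4
    server: $5
EOF
}

# Usage (requires an active oc login); values mirror the first manifest above:
#   pv_manifest gvp-mcp-logs-01 10Gi gvp /export/vol1/PAT/gvp/mcp-logs-01 192.168.30.51 | oc create -f -
```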

Install Helm chart

Download the required Helm chart release from the JFrog repository and install. Refer to Helm Chart URLs.

helm install gvp-mcp-blue ./<gvp-mcp-helm-artifact> -f gvp-mcp-values.yaml |

Before installing, set the following values in gvp-mcp-values.yaml:

- <critical-priority-class> - Set to a priority class that exists on the cluster (or create one)

- <docker-repo> - Set to your Docker Repo with Private Edition Artifacts

- <credential-name> - Set to your pull secret name

- Set logicalResourceGroup: "MCP_Configuration_Unit" to add MCPs to the real configuration unit (rather than the test one)
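A leftover placeholder such as <docker-repo> or <credential-name> in the values file is an easy mistake to make before running helm install. The following is a minimal sketch of a pre-install check. The function name unresolved_placeholders is hypothetical, and the pattern assumes placeholders are written as lowercase words in angle brackets, as in the list above.

```shell
#!/bin/sh
# Hypothetical pre-install check: prints (with line numbers) every line of a
# values file that still contains an unreplaced <placeholder> token.
# Returns non-zero if any placeholder is found, zero otherwise.
unresolved_placeholders() {
  if grep -nE '<[a-z][a-z-]*>' "$1"; then
    return 1
  fi
  return 0
}

# Usage: run the install command only when the check passes.
#   unresolved_placeholders gvp-mcp-values.yaml && echo "values file is ready for helm install"
```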

## Default values for gvp-mcp.

## This is a YAML-formatted file.

## Declare variables to be passed into your templates.

## Global Parameters

## Add labels to all the deployed resources

##

podLabels: {}

## Add annotations to all the deployed resources

##

podAnnotations: {}

serviceAccount:

# Specifies whether a service account should be created

create: false

# Annotations to add to the service account

annotations: {}

# The name of the service account to use.

# If not set and create is true, a name is generated using the fullname template

name:

## Deployment Configuration

deploymentEnv: "UPDATE_ENV"

replicaCount: 2

terminationGracePeriod: 3600

## Name and dashboard overrides

nameOverride: ""

fullnameOverride: ""

dashboardReplicaStatefulsetFilterOverride: ""

## Base Labels. Please do not change these.

serviceName: gvp-mcp

component: shared

partOf: gvp

## Command-line arguments to the MCP process

args:

- "gvp-configserver"

- "8888"

- "default"

- "/etc/mcpconfig/config.ini"

## Container image repo settings.

image:

mcp:

registry: <docker-repo>

repository: gvp/multicloud/gvp_mcp

tag: "{{ .Chart.AppVersion }}"

pullPolicy: IfNotPresent

serviceHandler:

registry: <docker-repo>

repository: gvp/multicloud/gvp_mcp_servicehandler

tag: "{{ .Chart.AppVersion }}"

pullPolicy: IfNotPresent

configHandler:

registry: <docker-repo>

repository: gvp/multicloud/gvp_mcp_confighandler

tag: "{{ .Chart.AppVersion }}"

pullPolicy: IfNotPresent

snmp:

registry: <docker-repo>

repository: gvp/multicloud/gvp_snmp

tag: v9.0.040.21

pullPolicy: IfNotPresent

rup:

registry: <docker-repo>

repository: cce/recording-provider

tag: 9.0.000.00.b.1432.r.ef30441

pullPolicy: IfNotPresent

## MCP specific settings

mcp:

## Settings for liveness and readiness probes of MCP

## !!! THESE VALUES SHOULD NOT BE CHANGED UNLESS INSTRUCTED BY GENESYS !!!

livenessValues:

path: /mcp/liveness

initialDelaySeconds: 30

periodSeconds: 60

timeoutSeconds: 20

failureThreshold: 3

healthCheckAPIPort: 8300

# Used instead of startupProbe. This runs all initial self-tests, and could take some time.

# Timeout is < 1 minute (with reduced test set), and interval/period is 1 minute.

readinessValues:

path: /mcp/readiness

initialDelaySeconds: 30

periodSeconds: 60

timeoutSeconds: 50

failureThreshold: 3

healthCheckAPIPort: 8300

# Location of configuration file for MCP

# initialConfigFile is the default template

# finalConfigFile is the final configuration after overrides are applied (see mcpConfig section for overrides)

initialConfigFile: "/etc/config/config.ini"

finalConfigFile: "/etc/mcpconfig/config.ini"

# Dev and QA deployments will use MCP_Configuration_Unit_Test LRG and shared deployments will use MCP_Configuration_Unit LRG

logicalResourceGroup: "MCP_Configuration_Unit"

# Threshold values for the various alerts in podmonitor.

alerts:

cpuUtilizationAlertLimit: 70

memUtilizationAlertLimit: 90

workingMemAlertLimit: 7

maxRestarts: 2

persistentVolume: 20

serviceHealth: 40

recordingError: 7

configServerFailure: 0

dtmfError: 1

dnsError: 6

totalError: 120

selfTestError: 25

fetchErrorMin: 120

fetchErrorMax: 220

execError: 120

sdpParseError: 1

mediaWarning: 3

mediaCritical: 7

fetchTimeout: 10

fetchError: 10

ngiError: 12

ngi4xx: 10

recPostError: 7

recOpenError: 1

recStartError: 3

recCertError: 7

reportingDbInitError: 1

reportingFlushError: 1

grammarLoadError: 1

grammarSynError: 1

dtmfGrammarLoadError: 1

dtmfGrammarError: 1

vrmOpenSessError: 1

wsTokenCreateError: 1

wsTokenConfigError: 1

wsTokenFetchError: 1

wsOpenSessError: 1

wsProtoError: 1

grpcConfigError: 1

grpcSSLRootCertError: 1

grpcGoogleCredentialError: 1

grpcRecognizeStartError: 7

grpcWriteError: 7

grpcRecognizeError: 7

grpcTtsError: 7

streamerOpenSessionError: 1

streamerProtocolError: 1

msmlReqError: 7

dnsResError: 6

rsConnError: 150

## RUP (Recording Uploader) Settings

rup:

## Settings for liveness and readiness probes of RUP

## !!! THESE VALUES SHOULD NOT BE CHANGED UNLESS INSTRUCTED BY GENESYS !!!

livenessValues:

path: /health/live

initialDelaySeconds: 30

periodSeconds: 60

timeoutSeconds: 20

failureThreshold: 3

healthCheckAPIPort: 8080

readinessValues:

path: /health/ready

initialDelaySeconds: 30

periodSeconds: 30

timeoutSeconds: 20

failureThreshold: 3

healthCheckAPIPort: 8080

## RUP PVC defines

rupVolume:

storageClass: "genesys"

accessModes: "ReadWriteOnce"

volumeSize: 40Gi

## Other settings for RUP

recordingsFolder: "/pvolume/recordings"

recordingsCache: "/pvolume/recording_cache"

rupProvisionerEnabled: "false"

decommisionDestType: "WebDAV"

decommisionDestWebdavUrl: "http://gvp-central-rup:8180"

decommisionDestWebdavUsername: ""

decommisionDestWebdavPassword: ""

diskFullDestType: "WebDAV"

diskFullDestWebdavUrl: "http://gvp-central-rup:8180"

diskFullDestWebdavUsername: ""

diskFullDestWebdavPassword: ""

cpUrl: "http://cce-conversation-provider.cce.svc.cluster.local"

unrecoverableLostAction: "uploadtodefault"

unrecoverableDestType: "Azure"

unrecoverableDestAzureAccountName: "gvpwestus2dev"

unrecoverableDestAzureContainerName: "ccerp-unrecoverable"

logJsonEnable: true

logLevel: INFO

logConsoleLevel: INFO

## RUP resource requests and limits

## ref: http://kubernetes.io/docs/user-guide/compute-resources/

resources:

requests:

memory: "128Mi"

cpu: "100m"

ephemeral-storage: "1Gi"

limits:

memory: "2Gi"

cpu: "1000m"

## PVCs defined. RUP one is under "rup" label.

recordingStorage:

storageClass: "genesys"

accessModes: "ReadWriteOnce"

volumeSize: 40Gi

## MCP log storage volume types.

mcpLogStorage:

volumeType:

persistentVolume:

enabled: false

storageClass: disk-premium

volumeSize: 50Gi

accessModes: ReadWriteOnce

hostPath:

enabled: true

path: /mnt/log

emptyDir:

enabled: false

## FluentBit Settings

fluentBitSidecar:

enabled: false

## Service Handler configuration. Note, the port values CANNOT be changed here alone, and should not be changed.

serviceHandler:

serviceHandlerPort: 8300

mcpSipPort: 5070

consuleExternalHost: ""

consulPort: 8501

registrationInterval: 10000

mcpHealthCheckInterval: 30s

mcpHealthCheckTimeout: 10s

## Config Server values passed to RUP, etc. These should not be changed.

configServer:

host: gvp-configserver

port: "8888"

## Secrets storage related settings - k8s secrets or csi

secrets:

# Used for pulling images/containers from the repositories.

imagePull:

- name: <credential-name>

# Config Server secrets. If k8s is false, csi will be used, else k8s will be used.

configServer:

k8s: true

secretName: configserver-secret

dbUserKey: username

dbPasswordKey: password

csiSecretProviderClass: keyvault-gvp-gvp-configserver-secret

# Consul secrets. If k8s is false, csi will be used, else k8s will be used.

consul:

k8s: true

secretName: shared-consul-consul-gvp-token

secretKey: consul-consul-gvp-token

csiSecretProviderClass: keyvault-consul-consul-gvp-token

## Ingress configuration

ingress:

enabled: false

annotations: {}

# kubernetes.io/ingress.class: nginx

# kubernetes.io/tls-acme: "true"

hosts:

- host: chart-example.local

paths: []

tls: []

# - secretName: chart-example-tls

# hosts:

# - chart-example.local

## App resource requests and limits

## ref: http://kubernetes.io/docs/user-guide/compute-resources/

## Values for MCP, and the default ones for other containers. RUP ones are under "rup" label.

##

resourcesMcp:

requests:

memory: "200Mi"

cpu: "250m"

ephemeral-storage: "1Gi"

limits:

memory: "2Gi"

cpu: "300m"

resourcesDefault:

requests:

memory: "128Mi"

cpu: "100m"

limits:

memory: "128Mi"

cpu: "100m"

# We usually recommend not to specify default resources and to leave this as a conscious

# choice for the user. This also increases chances charts run on environments with little

# resources, such as Minikube. If you do want to specify resources, uncomment the following

# lines, adjust them as necessary, and remove the curly braces after 'resources:'.

# limits:

# cpu: 100m

# memory: 128Mi

# requests:

# cpu: 100m

# memory: 128Mi

## App containers' Security Context

## ref: https://kubernetes.io/docs/tasks/configure-pod-container/security-context/#set-the-security-context-for-a-container

##

## Containers should run as genesys user and cannot use elevated permissions

## Pod level security context

podSecurityContext:

fsGroup: 500

runAsUser: 500

runAsGroup: 500

runAsNonRoot: true

## Container security context

securityContext:

# fsGroup: 500

runAsUser: 500

runAsGroup: 500

runAsNonRoot: true

## Priority Class

## ref: https://kubernetes.io/docs/concepts/configuration/pod-priority-preemption/

## NOTE: this is an optional parameter

##

priorityClassName: <critical-priority-class>

# affinity: {}

## Node labels for assignment.

## ref: https://kubernetes.io/docs/user-guide/node-selection/

##

nodeSelector:

#genesysengage.com/nodepool: realtime

## Tolerations for assignment.

## ref: https://kubernetes.io/docs/concepts/configuration/taint-and-toleration/

##

tolerations: []

# - key: "kubernetes.azure.com/scalesetpriority"

# operator: "Equal"

# value: "spot"

# effect: "NoSchedule"

# - key: "k8s.genesysengage.com/nodepool"

# operator: "Equal"

# value: "compute"

# effect: "NoSchedule"

# - key: "kubernetes.azure.com/scalesetpriority"

# operator: "Equal"

# value: "compute"

# effect: "NoSchedule"

#- key: "k8s.genesysengage.com/nodepool"

# operator: Exists

# effect: NoSchedule

## Extra labels

## ref: https://kubernetes.io/docs/concepts/overview/working-with-objects/labels/

##

## Use podLabels

#labels: {}

## Extra Annotations

## ref: https://kubernetes.io/docs/concepts/overview/working-with-objects/annotations/

##

## Use podAnnotations

#annotations: {}

## Autoscaling Settings

## Keda can be used as an alternative to HPA, but only to scale MCPs based on a cron schedule (UTC).

## If this is set to true, Keda is used for scaling; otherwise, HPA is used directly.

useKeda: true

## If Keda is enabled, only the following parameters are supported, and default HPA settings

## will be used within Keda.

keda:

preScaleStart: "0 14 * * *"

preScaleEnd: "0 2 * * *"

preScaleDesiredReplicas: 4

pollingInterval: 15

cooldownPeriod: 300

## HPA Settings

# GVP-42512: PDB issue

# Always keep the following:

# minReplicas >= 2

# maxUnavailable = 1

hpa:

enabled: false

minReplicas: 2

maxUnavailable: 1

maxReplicas: 4

podManagementPolicy: Parallel

targetCPUAverageUtilization: 20

scaleupPeriod: 15

scaleupPods: 4

scaleupPercent: 50

scaleupStabilizationWindow: 0

scaleupPolicy: Max

scaledownPeriod: 300

scaledownPods: 2

scaledownPercent: 10

scaledownStabilizationWindow: 3600

scaledownPolicy: Min

## Service/Pod Monitoring Settings

prometheus:

mcp:

name: gvp-mcp-snmp

port: 9116

rup:

name: gvp-mcp-rup

port: 8080

podMonitor:

enabled: true

grafana:

enabled: false

#log:

# name: gvp-mcp-log

# port: 8200

## Pod Disruption Budget Settings

podDisruptionBudget:

enabled: true

## Enable network policies or not

networkPolicies:

enabled: false

## DNS configuration options

dnsConfig:

options:

- name: ndots

value: "3"

## Configuration overrides

mcpConfig:

# MCP config overrides

mcp.mpc.numdispatchthreads: 4

mcp.log.verbose: "interaction"

mcp.mpc.codec: "pcmu pcma telephone-event"

mcp.mpc.transcoders: "PCM MP3"

mcp.mpc.playcache.enable: 1

mcp.fm.http_proxy: ""

mcp.fm.https_proxy: ""

# Nexus client configuration.

# !!! Other than pool.size and resource.uri, no other parameter should be changed. !!!

nexus_asr1.pool.size: 0

nexus_asr1.provision.vrm.client.resource.uri: "[\"ws://nexus-production.nexus.svc.cluster.local/nexus/v3/bot/connection\"]"

nexus_asr1.provision.vrm.client.resource.type: "ASR"

nexus_asr1.provision.vrm.client.resource.name: "ASRC"

nexus_asr1.provision.vrm.client.resource.engines: "nexus"

nexus_asr1.provision.vrm.client.resource.engine.nexus.audio.codec: "mulaw"

nexus_asr1.provision.vrm.client.resource.engine.nexus.audio.samplerate: 8000

nexus_asr1.provision.vrm.client.resource.engine.nexus.enableMaxSpeechTimeout: true

nexus_asr1.provision.vrm.client.resource.engine.nexus.serviceAccountKey: ""

nexus_asr1.provision.vrm.client.resource.privatekey: ""

nexus_asr1.provision.vrm.client.resource.proxy: ""

nexus_asr1.provision.vrm.client.TransportProtocol: "WEBSOCKET"

|
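The probe settings under mcp.livenessValues and mcp.readinessValues determine roughly how long an unhealthy MCP container runs before the failure threshold is reached. The following is a back-of-the-envelope sketch, assuming the first probe fires after initialDelaySeconds, later probes follow every periodSeconds, and the last one takes up to timeoutSeconds to fail. It ignores kubelet scheduling jitter, and the function name probe_failure_window is hypothetical.

```shell
#!/bin/sh
# Rough estimate (in seconds) of how long a container that never becomes
# healthy runs before the probe's failure threshold is reached.
# Arguments: <initialDelaySeconds> <periodSeconds> <timeoutSeconds> <failureThreshold>
# Approximation only: the kubelet's scheduling jitter is ignored.
probe_failure_window() {
  echo $(( $1 + ($4 - 1) * $2 + $3 ))
}

probe_failure_window 30 60 20 3   # MCP liveness values from above, prints 170
probe_failure_window 30 60 50 3   # MCP readiness values from above, prints 200
```

With the values shown, a liveness failure leads to a container restart after about three minutes, which is worth keeping in mind when reading restart counts during validation.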