Kubernetes-supported structured logging

From Genesys Documentation

This topic is part of the manual Operations for version Current of Genesys Multicloud CX Private Edition.

A secondary logging method, used for the standard structured logs that containers write to stdout/stderr.

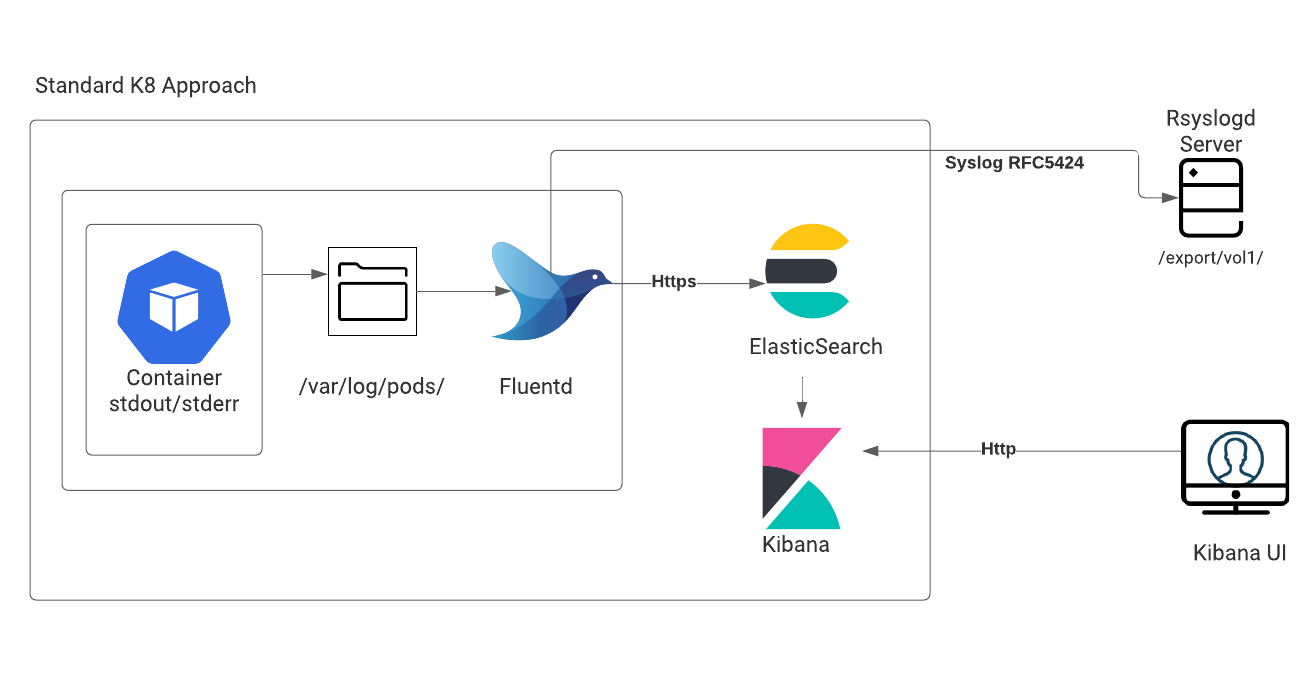

This logging method is required for the standard stdout/stderr structured logs that containers generate within the Kubernetes environment. For that reason, it is also called Kubernetes-supported logging. With this method, the container's stdout/stderr logs are written to the /var/log/containers directory.
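As an illustration of what "structured logs on stdout" can look like from inside a container, here is a minimal Python sketch that emits one JSON object per log line. It is not taken from any Genesys service: the field names (`timestamp`, `level`, `logger`, `message`), the `JsonFormatter` class, the `build_logger` helper, and the `example-service` name are all hypothetical, and the actual schema used by each service may differ.

```python
import json
import logging
import sys
from datetime import datetime, timezone


class JsonFormatter(logging.Formatter):
    """Render each log record as a single-line JSON object."""

    def format(self, record: logging.LogRecord) -> str:
        # Field names here are illustrative assumptions, not a Genesys schema.
        entry = {
            "timestamp": datetime.fromtimestamp(
                record.created, tz=timezone.utc
            ).isoformat(),
            "level": record.levelname,
            "logger": record.name,
            "message": record.getMessage(),
        }
        return json.dumps(entry)


def build_logger(name: str, stream=sys.stdout) -> logging.Logger:
    """Return a logger that writes JSON lines to the given stream (stdout by default)."""
    handler = logging.StreamHandler(stream)
    handler.setFormatter(JsonFormatter())
    logger = logging.getLogger(name)
    logger.handlers.clear()   # avoid duplicate output if called twice
    logger.addHandler(handler)
    logger.setLevel(logging.INFO)
    logger.propagate = False  # keep records out of the root logger
    return logger


if __name__ == "__main__":
    log = build_logger("example-service")
    log.info("service started")
```

Because the process writes only to stdout, nothing in the application needs to know about log files or about the aggregator; collecting the output from the node is left to the Kubernetes logging path described above.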

You can choose which external log aggregator to use to aggregate these logs.

Services that use Kubernetes structured logging:

- Genesys Authentication

- Web Services and Applications

- Genesys Engagement Services

- Designer

Important

Some services (such as Genesys Info Mart) use the Kubernetes logging approach, except that their logs are written in an unstructured format.

GKE logging

Click here for details about GKE logging.